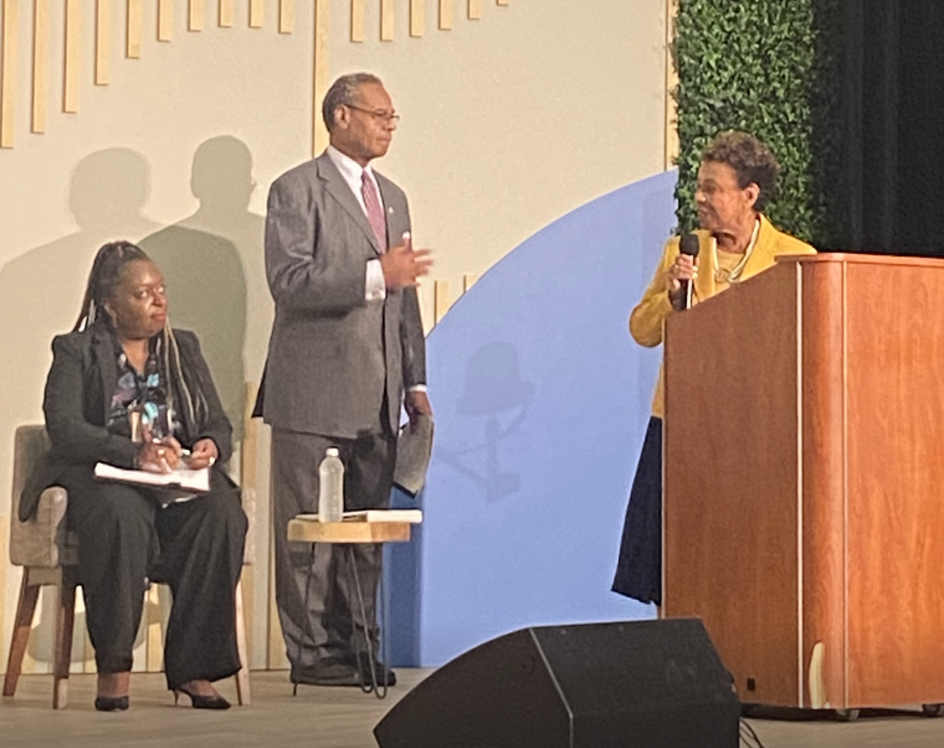

From left: Moderator Kimberly Bryant with Rep. Emanuel Cleaver, II, and Rep. Barbara Lee, who co-hosted a panel discussion on how AI impacts the Black community at the Congressional Black Caucus Annual Legislative Conference on Sept. 20, 2023, in Washington, D.C. | Source: NewsOne

WASHINGTON – Making inroads on the artificial intelligence (AI) front has been a well-documented uphill battle to make sure the most diverse voices inform the ongoing conversation about computer systems that rely on datasets to learn patterns and make predictions.

One particular concern is that Black people could be entirely left out unless they’re involved in the process early on. But while tangible progress is being made on that front, there’s still a long way to go.

“The impact on minority communities – especially the Black community – is not considered until something goes wrong,” California Congresswoman Barbara Lee said Wednesday.

Lee sounded the alarm while speaking during a panel discussion that she was co-hosting at the Congressional Black Caucus Annual Legislative Conference in Washington, D.C. It was one of several sessions about AI planned during the week of policy conferences in the nation’s capital about issues impacting African Americans and the global Black community, accentuating the importance of both the topic and the moment.

“AI bias and discrimination are a direct result of a lack of diversity of the teams developing the models,” Lee continued before emphasizing: “No diversity, no Black lens.”

She described the plight of inclusion in AI as “another front on the civil rights spectrum,” a sentiment echoed by panelists who similarly underscored the urgency to level the playing field before it’s too late.

Moderated by Kimberly Bryant of Black Girls Code fame, the discussion – entitled “Friend or Foe: How AI Impacts The Black Community” – also addressed the importance of investing in Black tech companies and expanding Black communities’ footprints in the tech space.

Still, the overarching theme of how AI impacts Black people was paramount as experts in the field offered their thoughts on how to move forward.

Myaisha Hayes, the Campaign Strategies Director at MediaJustice, pointed to efforts by tech companies to dodge accountability for how AI impacts the Black community.

“Part of the reason why we’re not also making progress is because a lot of these tech companies kind of push this narrative that AI and technology are just too complicated to explain now,” Hayes said before explaining that’s just “a talking point to shield them from calls for accountability.”

Pointing to her previous experience as the National Organizer on Criminal Justice & Technology at MediaJustice, Hayes stressed the absence of transparency when it comes to AI and how it can adversely affect Black people.

One particular example, Hayes said, was in the criminal justice system, where AI models are being used to assess so-called pre-trial risks and make predictions about whether people will appear in court. Hayes said the data points informing the algorithms, like zip codes and age, are coming from the legal system and are supposed to be an indicator of crime.

“They’re actually an indication of who’s the most policed in our country,” Hayes said. “And so it’s absolutely possible to build transparency, but that’s a choice that [tech companies] aren’t making.”

Hayes said it’s important to stop conceding that point, but sometimes that’s easier said than done because so many Black people don’t come from tech backgrounds or have expertise in AI.

“So when tech companies say, ‘Oh, this stuff is too complicated to explain,’ we tend to believe them,” Hayes added, explaining that these companies often exist in an environment where they get to play by their own rules, don’t feel any responsibility to comply with legal requirements and ignore public criticism.

Hayes is right.

AI systems rely heavily on vast datasets to learn patterns and make predictions. Unfortunately, historical data is often plagued with biases and has elements of systemic racism baked in. AI systems also require the help of humans, who can insert their own biases into AI algorithms and programming.

If the data used to train AI algorithms disproportionately represents negative stereotypes or discriminatory practices, the resulting models can perpetuate and amplify those biases. This is dangerous because it can create the perfect breeding ground for anti-Blackness, leading to unfair treatment and discrimination against Black people in various domains, such as criminal justice, employment and lending.

The history of AI and its entanglement with anti-Blackness reveals the urgent need for critical examination, transparency, and ethical considerations within the development and deployment of AI systems. Addressing biases and ensuring equitable outcomes are crucial steps toward building a future where technology works to dismantle, rather than reinforce, systemic discrimination.

Missouri Congressman Rev. Emanuel Cleaver, II, who co-hosted the panel discussion with Rep. Lee, called AI technology “almost limitless” and said “we don’t know” where it will end up.

“If we’re not pushing it or involved in it, we will get run over by it,” Cleaver, 78, warned while using an anecdote about the iPhone’s autocorrect feature trying to predict what he would type in a text message to his granddaughter.

Cleaver said that the truth about AI extends to the financial sector, too.

“We have to be in on it because also when you start talking about using different forms of wealth to do your banking,” Cleaver continued. “AI is making decisions about mortgages to determine who can get one.”

So what’s the solution moving forward? The panel discussion only lasted 90 minutes, which wasn’t nearly enough time to put an action plan into effect. But local resources are key to informing Black communities about AI and what to do next as tech companies increasingly reign supreme in society.

“A lot of the information that we know about AI right now is actually coming a lot from grassroots communities that are pulling together their capacity of resources to work with researchers to make this technology more accessible and more transparent to our communities,” Hayes said.
